On March 19, 2026, Meta Platforms dropped a major update that sent ripples through the tech and media worlds: the company is aggressively deploying advanced AI systems to handle content enforcement across Facebook, Instagram, Threads, and its other apps. Over the next few years, this will dramatically reduce its reliance on third-party vendors and human contractors who have long reviewed posts, comments, and videos for violations of community standards.

Instead of outsourcing basic moderation tasks to external firms, Meta is betting on its own AI to catch scams, graphic content, impersonation, and other harmful material faster and more accurately than ever before. For a platform that processes billions of pieces of content daily, this isn’t just an efficiency play — it’s a fundamental rewrite of how online safety works in the age of generative AI and massive scale.

Read Meta’s official announcement for the full details: Boosting Your Support and Safety on Meta’s Apps With AI.

This shift builds on years of incremental AI adoption but now accelerates it into a full replacement strategy for many routine human review roles. If you’re an Android user downloading social apps or AI tools, understanding these changes matters — it affects what content you see (or don’t see) every day. For safe, verified APK downloads of Facebook, Instagram, and Meta-related apps, check our Facebook APK mirror page or Instagram APK section.

The Old Model: Third-Party Human Reviewers at Scale

For more than a decade, Meta’s content moderation relied heavily on a hybrid system. AI handled the first pass: flagging obvious spam, nudity, or known violating images via hash-matching and basic classifiers. But the heavy lifting fell to thousands of human reviewers employed by third-party contractors such as Accenture, Cognizant, and others.
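To make the hash-matching step concrete, here is a minimal sketch, assuming a simple exact-match lookup with SHA-256. The blocklist, the sample bytes, and the function names are hypothetical and chosen only for illustration. The article does not say which hashing scheme Meta uses; exact hashes only catch byte-identical copies, and production systems generally rely on perceptual hashes that tolerate resizing or re-encoding.

```python
import hashlib

# Hypothetical blocklist of SHA-256 digests for content already judged violating.
# In this sketch it holds a single made-up entry.
KNOWN_VIOLATING_HASHES = {
    hashlib.sha256(b"example-known-violating-image-bytes").hexdigest(),
}


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the raw upload bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def matches_known_content(data: bytes, blocklist=KNOWN_VIOLATING_HASHES) -> bool:
    """True if the upload is byte-identical to a previously flagged item."""
    return sha256_hex(data) in blocklist
```

A lookup like this is cheap, which is why it suits a first pass: anything that matches can be actioned immediately, and everything else moves on to classifiers or reviewers.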

These reviewers, often working in call centers around the world with low pay and exposure to traumatic content, made final calls on borderline cases. At its peak in the late 2010s and early 2020s, Meta relied on more than 15,000 such contract reviewers worldwide. High-profile scandals, including reports of PTSD among moderators, inconsistent enforcement, and cultural blind spots, highlighted the system’s flaws. Human reviewers struggled with volume, language barriers (coverage extended to only about 80 languages at one point), and the rapid evolution of scams and hate speech tactics.

By 2025, Meta had already begun pruning parts of this model. In January 2025, the company ended its third-party fact-checking partnerships in the U.S. and shifted to a user-driven “Community Notes” system inspired by X (formerly Twitter). That move focused on misinformation labeling rather than outright removal, but it signaled a broader retreat from external human oversight.

For deeper historical context on Meta’s earlier moderation changes, see this Reuters report from January 2025.

The New AI-Driven Era: Advanced Systems Take Center Stage

Meta’s latest announcement marks the next leap. The company is rolling out “more advanced AI systems” — powered by large language models and sophisticated multimodal classifiers — that can now handle repetitive or fast-evolving tasks previously routed to human contractors.

Key capabilities highlighted in the official post include:

- Detecting and mitigating 5,000 scam attempts per day that previous review teams missed.

- Reducing user reports about impersonation of the most-impersonated celebrities by over 80%.

- Catching twice as much adult sexual solicitation content while cutting mistaken removals by over 60%.

- Identifying fake websites spoofing legitimate brands (e.g., a sporting goods store with suspicious pricing and addresses), driving down scam ad views by 7%.

- Preventing account takeovers by spotting unusual login patterns or profile changes in real time.

These systems don’t just scan for static patterns. They adapt to cultural nuances, subcultures, emojis, slang, code words, and rapidly changing adversarial tactics, such as new drug-selling lingo or scam formats. Language coverage expands from roughly 80 languages to the languages spoken by 98% of people online.

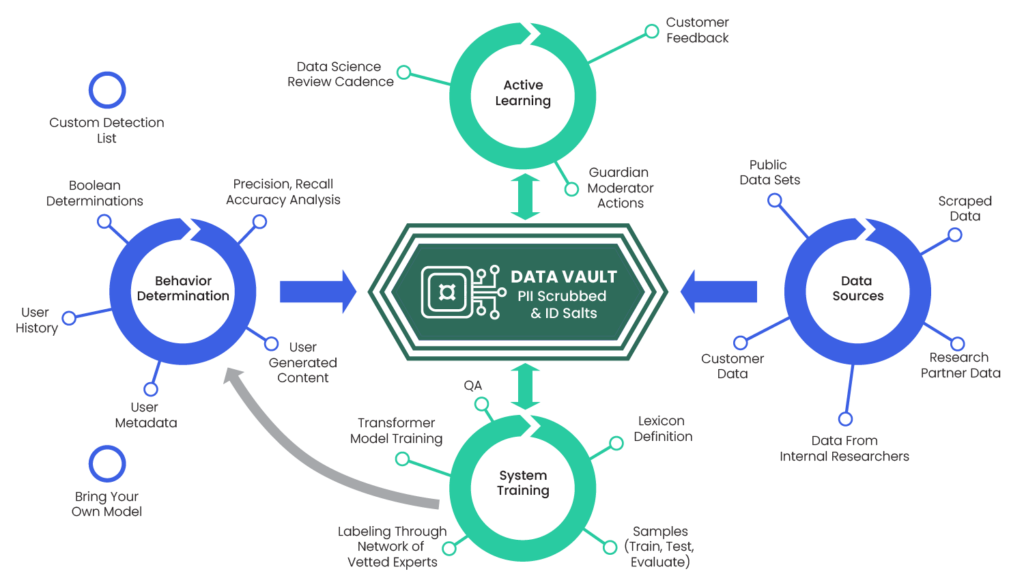

Meta’s traditional AI process (still in use alongside the new systems) starts with machine-learning models that analyze text, images, and videos. For example, models trained on millions of examples can spot nudity or graphic violence in photos. Flagged content either gets auto-removed or sent for human review. The new advanced layer adds contextual understanding via LLMs, allowing the system to evaluate intent, sarcasm, or evolving threats without constant human intervention.
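The flag-then-route step described above can be sketched as a simple threshold rule. This is an illustration under stated assumptions, not Meta’s implementation: the score range, both threshold values, and all names (`Action`, `route`, `REMOVE_THRESHOLD`, `REVIEW_THRESHOLD`) are hypothetical.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    AUTO_REMOVE = "auto_remove"


# Assumed cut-offs on a classifier's violation probability (0.0 to 1.0).
REMOVE_THRESHOLD = 0.95   # very confident: remove automatically
REVIEW_THRESHOLD = 0.60   # uncertain: send to a human reviewer


def route(score: float) -> Action:
    """Map a model's violation score to an enforcement action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be a probability between 0 and 1")
    if score >= REMOVE_THRESHOLD:
        return Action.AUTO_REMOVE
    if score >= REVIEW_THRESHOLD:
        return Action.HUMAN_REVIEW
    return Action.ALLOW
```

In a design like this, sending fewer cases to humans amounts to narrowing the band between the two thresholds, which is only safe if the model’s scores in that band have become more reliable.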

As explained in Meta’s own help documentation: “AI teams start by building machine learning models that can do things such as recognise what’s in a photo or analyse the text of a post… Our technology sends content to human review teams to take a closer look and make a decision on it.” The AI learns iteratively from those human decisions. Now, that learning loop is being supercharged so fewer cases reach humans at all.

Explore how these AI technologies power modern apps in our Android AI Tools 2026 roundup, which includes safe APK downloads for Meta AI features and similar utilities.

Why the Switch? Speed, Scale, and Cost Efficiency

Meta’s reasons are straightforward and compelling. Human moderation at Meta’s scale is expensive, slow, and inconsistent. Third-party contractors introduce delays, training gaps, and regional biases. AI, by contrast, operates 24/7, scales instantly, and improves continuously with more data.

The company explicitly states it will “reduce our reliance on third-party vendors for content enforcement and focus on strengthening our internal systems and workforce.” Repetitive tasks — reviewing graphic images or chasing ever-mutating scam tactics — are now “better suited to technology.” Humans remain essential for high-stakes decisions: appeals of account bans, law-enforcement reports, and complex policy edge cases.

According to Meta, early tests show the AI both catches more violations and reduces over-enforcement errors, such as the reported cut of over 60% in mistaken removals of adult sexual solicitation content. This aligns with Meta’s broader 2025–2026 push toward “more speech, fewer mistakes,” as CEO Mark Zuckerberg framed earlier policy shifts.

The Human Cost: What Happens to Third-Party Reviewers?

The transition won’t be painless. Third-party vendors that supplied moderators for years face shrinking contracts. While Meta hasn’t announced mass internal layoffs tied directly to this (though Reuters reported potential 20%+ workforce reductions amid broader AI cost pressures), external contractors are already seeing work dry up for routine enforcement tasks.

Industry observers expect thousands of reviewer roles to disappear globally over the multiyear rollout. Critics worry about lost jobs in developing countries where these positions provided steady (if demanding) employment. Meta counters that internal teams will grow in oversight, training, and evaluation roles — but the net effect is clear: fewer humans reviewing day-to-day content.

For a balanced view on AI’s workforce impact, see coverage from TechCrunch on the rollout.

Potential Downsides and Lingering Concerns

AI moderation isn’t perfect. Even Meta’s Oversight Board has previously criticized gaps in handling AI-generated content and contextual nuance. False positives can still silence legitimate speech — especially in non-English languages or niche communities. Adversarial actors quickly test new workarounds, requiring constant model retraining.

Bias in training data remains a risk, though Meta claims rigorous testing and safeguards. Cultural adaptation is improving, but no system fully replaces human empathy in edge cases involving satire, activism, or regional politics.

Users have reported account suspensions due to overzealous AI flags in the past; the new systems aim to cut such errors, but transparency around appeals will be critical. Meta promises better reporting tools and faster responses, but independent watchdogs will scrutinize results.

Meta’s Oversight Board has raised related issues in the past — read more in their statements on AI policy enforcement.

What This Means for Users and the Future of Social Media

For everyday users on Android or iOS, the change should mean safer feeds with fewer scams and impersonators slipping through. Appeals and support now get a boost from the new Meta AI Support Assistant, rolling out globally for password resets, account recovery, and profile issues — responding in under five seconds, 24/7.

Creators and brands benefit from reduced mistaken takedowns. But the broader societal question lingers: Can AI truly protect vulnerable groups without over-censoring? As platforms lean harder into automation, user-driven tools like Community Notes and transparent appeals become even more important.

Looking ahead, Meta plans a multiyear phased rollout, with AI handling more volume while humans focus on oversight. This model will likely influence competitors — Google, TikTok, and X are all investing in similar AI moderation tech.

On apkmirror.shop, we’ll continue tracking these developments. If you want the latest verified APKs for Meta apps or AI assistants, visit our Social Media Apps category or AI & Productivity section.

Conclusion: A New Chapter in Digital Safety

Meta’s AI-driven moderation push isn’t just replacing third-party reviewers; it’s redefining the balance between scale, safety, and speech on the world’s largest social platforms. With early wins reported by Meta in scam prevention and accuracy, the technology promises a more responsive system. Yet the human element of oversight, appeals, and ethical guardrails remains indispensable.

As this multiyear transformation unfolds, users, creators, and regulators will watch closely. One thing is certain: the era of thousands of external human moderators making split-second calls on billions of posts is winding down. In its place rises a hybrid future where AI does the heavy lifting and humans steer the ship.

Stay informed and download apps safely. For more tech analysis and APK mirrors, bookmark apkmirror.shop/blog and follow our updates on AI, social media, and digital policy.

How Meta’s AI-Driven Moderation Is Replacing Third-Party Content Reviewers

On March 19, 2026, Meta Platforms announced a significant acceleration in its use of advanced AI systems for content enforcement across Facebook, Instagram, Threads, and other apps. Over the coming years, the company plans to dramatically reduce its dependence on third-party vendors and human contractors who have traditionally reviewed posts, comments, videos, and other content for violations of community standards.

Instead, Meta is shifting routine and repetitive moderation tasks to its own AI models, which can now detect scams, graphic violence, impersonation, adult solicitation, and other harmful material with greater speed and accuracy. For a platform handling billions of content pieces daily, this move represents not only a major efficiency gain but also a transformation in how digital safety and free expression are balanced in the generative AI era.

Read the full details in Meta’s official announcement: Boosting Your Support and Safety on Meta’s Apps With AI.

This builds on Meta’s earlier 2025 policy shifts, including the replacement of third-party fact-checkers with a user-driven Community Notes system. For Android users seeking safe downloads of Meta apps, visit our Facebook APK mirror or Instagram APK section.

The Old Model: Third-Party Human Reviewers at Scale

Meta’s content moderation historically combined automated AI flagging with thousands of human reviewers employed by external contractors like Accenture and Cognizant. At its height, this network exceeded 15,000 contractors worldwide. Humans handled nuanced or borderline cases after AI’s initial pass using hash-matching and basic classifiers.

This system faced criticism for exposing workers to traumatic content, causing inconsistent enforcement, language limitations (covering roughly 80 languages), and cultural biases. High-profile reports documented PTSD among moderators and delays in addressing evolving threats like scams and hate speech.

In January 2025, Meta ended its U.S. third-party fact-checking partnerships and adopted a Community Notes-style model, signaling a broader move away from external human oversight.

For more background: Meta’s “More Speech and Fewer Mistakes” announcement (January 2025).

The New AI-Driven Era: Advanced Systems Take Center Stage

Meta’s March 2026 update introduces “more advanced AI systems” powered by large language models (LLMs) and multimodal classifiers. These tools now handle tasks previously sent to human reviewers, including:

- Detecting 5,000+ daily scam attempts that earlier systems missed.

- Reducing user reports of impersonation of the most-impersonated celebrities by over 80%.

- Identifying twice as much adult sexual solicitation content while cutting erroneous removals by over 60%.

- Spotting fake websites and suspicious ads, lowering scam ad views by 7%.

- Real-time prevention of account takeovers through behavioral analysis.

The new AI excels at understanding context, slang, emojis, code words, and rapidly changing adversarial tactics. Language coverage now reaches 98% of global online users. Repetitive or high-volume tasks — such as scanning graphic images or tracking drug-trafficking lingo — are increasingly automated, freeing humans for complex appeals, law-enforcement coordination, and policy oversight.

Meta’s traditional process still applies where needed: machine-learning models analyze content, with uncertain cases routed for review. The AI continuously learns from human feedback, creating a tighter improvement loop.
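As a rough sketch of that improvement loop, the snippet below records human review outcomes so they can later serve as training labels and as a check on how often the model and reviewers disagree. Every name here (`ReviewOutcome`, `FeedbackBuffer`, the 0.5 threshold) is a hypothetical illustration, not something described in Meta’s announcement.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewOutcome:
    content_id: str
    model_score: float           # model's estimated probability of a violation
    human_says_violation: bool   # the reviewer's final decision


@dataclass
class FeedbackBuffer:
    outcomes: list = field(default_factory=list)

    def record(self, outcome: ReviewOutcome) -> None:
        """Store one human decision alongside the model's score."""
        self.outcomes.append(outcome)

    def training_examples(self) -> list:
        """Return (content_id, label) pairs for the next retraining run."""
        return [(o.content_id, int(o.human_says_violation)) for o in self.outcomes]

    def disagreement_rate(self, threshold: float = 0.5) -> float:
        """Share of reviewed items where the model and the human disagreed."""
        if not self.outcomes:
            return 0.0
        wrong = sum(
            (o.model_score >= threshold) != o.human_says_violation
            for o in self.outcomes
        )
        return wrong / len(self.outcomes)
```

Tracking the disagreement rate over time is one plausible way to decide when a category of content is ready to be handled with less human review.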

Learn how similar AI powers modern apps in our Android AI Tools 2026 roundup.

Why the Switch? Speed, Scale, and Cost Efficiency

Human moderation at Meta’s scale is costly, slow, and prone to inconsistency. Third-party vendors add delays and regional variances. AI operates continuously, scales effortlessly, and improves with data.

Meta states it will “reduce our reliance on third-party vendors for content enforcement and focus on strengthening our internal systems.” Humans will continue reviewing high-stakes decisions, but routine work shifts to technology. Early results show higher detection rates and fewer mistakes, aligning with the company’s “more speech, fewer mistakes” philosophy.

The Human Cost: What Happens to Third-Party Reviewers?

The transition will impact thousands of contractor roles globally as contracts shrink for routine tasks. While Meta has not detailed mass internal layoffs, external moderation work is declining. Observers anticipate a multiyear phased reduction, with internal teams expanding in oversight and training roles. Critics highlight job losses in developing regions, though Meta emphasizes AI complements rather than fully eliminates human expertise.

Potential Downsides and Lingering Concerns

Despite gains, AI moderation faces challenges:

- False positives that may suppress legitimate speech, especially in non-English languages or niche contexts.

- Bias in training data and difficulties with sarcasm, satire, or cultural nuance.

- Rapidly evolving adversarial tactics requiring constant retraining.

- Oversight of AI-generated (deepfake) content, an area where Meta’s Oversight Board has called for stronger, separate rules and better provenance labeling using standards like C2PA.

Transparency in appeals and independent auditing will be essential. Meta promises improved support tools, including its new Meta AI Support Assistant for fast account recovery.

For Oversight Board perspectives: Meta’s Oversight Board on AI-generated content rules.

What This Means for Users and the Future of Social Media

Users should experience cleaner feeds with fewer scams and impersonators. Creators may see fewer wrongful takedowns. However, the shift raises broader questions about AI’s ability to protect vulnerable communities without over-censoring.

Meta plans a multiyear rollout, with AI handling increasing volume while humans focus on governance. This approach will likely influence competitors like TikTok, YouTube, and X.

On apkmirror.shop, we track these changes closely. Download verified APKs safely from our Social Media Apps category or AI & Productivity section.

Frequently Asked Questions (FAQs)

1. Will Meta completely eliminate human content reviewers?

No. Meta will continue using human teams for complex cases, appeals, policy development, and high-severity violations. AI handles repetitive or high-volume tasks, but human oversight remains for nuanced decisions.

2. How does Meta’s new AI compare to its previous moderation system?

The advanced AI is faster, covers more languages (those spoken by 98% of people online, versus roughly 80 languages before), catches more violations (e.g., scams and solicitation), and reduces errors. It adapts better to evolving threats through LLMs and multimodal analysis.

3. What happened to Meta’s third-party fact-checkers?

In January 2025, Meta ended partnerships with external fact-checkers in the U.S. and introduced a Community Notes-style system where users add context to potentially misleading posts.

4. How will this affect ordinary users on Facebook or Instagram?

Most users should notice fewer scams, impersonators, and harmful content. Appeals and support should become faster via the Meta AI Support Assistant. However, some legitimate posts might still trigger flags, so clear appeals processes are important.

5. Is AI moderation biased?

All AI systems carry risks of bias from training data. Meta claims rigorous testing and safeguards, but critics note challenges in Global South contexts and non-English content. Ongoing improvements and Oversight Board input aim to address this.

6. When will the full transition happen?

Meta describes a multiyear phased rollout starting now. Routine tasks are shifting first, with broader automation expanding over time.

7. How does this compare to other platforms?

X (Twitter) relies heavily on Community Notes. TikTok and YouTube also invest in AI moderation but maintain significant human review. Meta’s move is among the most aggressive toward internal AI dominance.

Top Related AI Content Moderation Products & Tools in 2026

If you’re interested in AI moderation beyond Meta, here are some of the leading commercial tools and APIs powering similar capabilities for websites, apps, and enterprises in 2026:

- Hive Moderation — Comprehensive AI platform for text, image, video, and audio. Strong in detecting hate speech, nudity, and scams with customizable models.

- Amazon Rekognition — AWS service excelling at visual content moderation (images/videos). Integrates easily with cloud workflows for enterprise-scale filtering.

- OpenAI Moderation API — Lightweight, effective for text-based content. Popular for quick integration in chat apps and social features; cost-efficient for startups.

- Google Cloud Content Moderation (Vision + Natural Language) — Robust multimodal tools with excellent language support and enterprise SLAs.

- Sightengine — Specialized in visual moderation (images/videos). Fast API for detecting explicit content, weapons, and drugs.

- Mixpeek — Multimodal platform with scene-level video analysis and explainable scoring — ideal for advanced social or streaming apps.

- Besedo — Hybrid human-AI service combining automation with expert review for high-accuracy needs.

- Lasso Moderation — User-friendly tool praised for ease of use and audit logging.

Many of these tools are available as APIs or SDKs that Android developers can integrate into custom apps. For safe, verified APK downloads of related AI utilities or moderation-testing apps, browse our AI Tools category on apkmirror.shop.

Conclusion: A New Chapter in Digital Safety

Meta’s aggressive push toward AI-driven moderation is replacing much of the third-party human reviewer model with faster, more scalable technology. Early results reported by Meta point to gains in scam prevention and accuracy, but challenges around bias, deepfakes, and free speech persist.

As this transformation unfolds, the hybrid model — powerful AI supported by strategic human oversight — will likely define the future of social platforms. Users, creators, and regulators will need to stay vigilant.

Bookmark apkmirror.shop/blog for ongoing coverage of AI, social media policy, and safe APK mirrors. Stay informed, download responsibly, and engage thoughtfully online.
